NAB Explores ATSC 3.0 App Development

Apps will unlock interactive features of next-gen platform

Posted on July 18, 2016 in ATSC News

By Deborah D. McAdams, TV Technology

Coding an app for the next-gen TV broadcasting platform is less complicated than conjuring one. That was the upshot of a presentation on authoring applications for the ATSC 3.0 platform, from the National Association of Broadcasters’ Pilot division.

“The possibilities are rather endless because you have a very robust environment,” said Azita Manson, principal and founder of OpenZNet, a consultancy that assisted CableLabs with its OpenCable protocols and specs.

“Any HTML developer can pick up the API and run with it.”

Apps are the key to unlocking the creative and interactive functions of ATSC 3.0, the next-gen broadcast transmission standard being hammered out at the Advanced Television Systems Committee. Just as the current ATSC standard was designed from the one-way analog perspective, 3.0 is being designed to reflect the internet and is therefore based on HTML5. The use of HTML5 opens the broadcast platform to the existing developer community for the first time.

There is no proverbial “killer app” for 3.0 as of yet, said So Vang, the NAB’s vice president of advanced technology. He said 3.0 is a “great platform to figure out what those killer apps might be,” and demoed a few to show “what’s possible” with 3.0. He brought up a menu bar within a hockey game with separate app tabs, including one for weather, which picture-in-pictured the program and displayed local weather. Another was a sports stats app related to the program, and yet another leveraged local content search. This last news-on-demand app was developed with News Press & Gazette.

Manson described a “broadcaster application” as “a collection of web pages,” where each page is either static or dynamically built, and each can be delivered over broadband or broadcast. The use of HTML5, JavaScript and CSS animation means broadcaster applications can run on a “normal browser,” she said.

With ATSC 3.0, broadcasters can combine broadcast and broadband distribution for “hybrid” delivery. In a hybrid environment, a broadcaster application distinguishes itself from other applications by its ability to support localized interactivity with no broadband connectivity, Manson said.

An example of leveraging a hybrid environment would be to deliver a major live event in English over the air, with simultaneous non-English-language streams delivered via broadband.
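The language example above can be sketched in code. This is only an illustration under stated assumptions: the `AudioTrack` type, the `selectAudioTrack` function and the track list are hypothetical names invented here, not part of any ATSC 3.0 API. The sketch prefers the viewer's language when that track is reachable and otherwise falls back to a track carried over the air.

```typescript
// Hypothetical types for illustration; not part of any ATSC 3.0 API.
type Delivery = "broadcast" | "broadband";

interface AudioTrack {
  language: string; // language tag such as "en" or "es"
  delivery: Delivery;
}

// Prefer the viewer's language when that track is reachable;
// otherwise fall back to any track carried over the air.
function selectAudioTrack(
  tracks: AudioTrack[],
  preferredLanguage: string,
  broadbandAvailable: boolean
): AudioTrack | undefined {
  // Broadband-only tracks are unreachable without a connection.
  const reachable = tracks.filter(
    (t) => t.delivery === "broadcast" || broadbandAvailable
  );
  return (
    reachable.find((t) => t.language === preferredLanguage) ||
    reachable.find((t) => t.delivery === "broadcast")
  );
}

// Assumed lineup: English over the air, Spanish over broadband.
const tracks: AudioTrack[] = [
  { language: "en", delivery: "broadcast" },
  { language: "es", delivery: "broadband" },
];
```

With this lineup, a Spanish-preferring viewer gets the broadband track when connected and the over-the-air English track when not, which mirrors the point that the app keeps working with no broadband connectivity.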

With dynamic apps, she said, broadcasters can load, reload and change content as events unfold, based on broadband availability, time of day, program start or end times, ad start and stop times, location, profile information such as gender or age, and collected data.
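A minimal sketch of that kind of dynamic page selection follows. Everything in it is an assumption made for illustration: the `ViewerContext` fields, the `choosePage` function and the page file names are hypothetical, and a real broadcaster application would draw these signals from whatever the receiver and the app framework actually expose.

```typescript
// Hypothetical context an app might consult; field names are illustrative.
interface ViewerContext {
  broadbandAvailable: boolean;
  hour: number; // local hour of day, 0-23
  adBreakActive: boolean;
}

// Return the name of the page variant to load for the current context.
function choosePage(ctx: ViewerContext): string {
  // During an ad break, target the ad only when broadband is available.
  if (ctx.adBreakActive) {
    return ctx.broadbandAvailable ? "ad-targeted.html" : "ad-default.html";
  }
  // Outside ad breaks, time of day takes priority.
  if (ctx.hour >= 5 && ctx.hour < 10) {
    return "morning-news.html";
  }
  // Otherwise pick the richer page only when broadband can supply it.
  return ctx.broadbandAvailable ? "program-extras.html" : "program-basic.html";
}
```

The app would call `choosePage` again whenever one of the inputs changes, such as an ad break starting or the broadband connection dropping.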

Some ideas for broadcaster apps include:

– Personalized interactive applications that adapt to viewer behavior while dynamically changing the web pages or video streams sent over broadband, accordingly.

– Personalized content viewing, i.e., enabling different camera perspectives.

– Program-based interactive applications, e.g., enhancing the program experience with additional metadata or alternative video streams a la click buying.

– Targeting and localization of ads.

– Subscription-based paid services, enhanced applications or additional content.

– Social media-based interactive applications.

Manson noted that the broadcaster app framework will be able to connect to “Facebook, Snapchat, whatever. It has the unique ability to combine the mediums” of broadcasting and broadband. In terms of lessons learned from executing the broadcaster app development workflow, Manson mentioned the limited horsepower in an embedded environment, and also the limited bandwidth of the 6 MHz broadcast license.

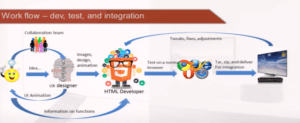

Developing a broadcaster app is a fairly straightforward endeavor in HTML coding, Manson said. More challenging, she said, was understanding what the broadcast team was envisioning.

Will Law, chief architect of Media Engineering for Akamai, took up bitrate. He said any single bitrate is by definition wrong for 99 percent of users.

“SBR doesn’t work well when we’re looking at broadband delivery. Bandwidth fluctuates over time,” he said, using shorthand for single bitrate. Traffic hops through several servers before reaching a home, for example, and any time a stream’s bitrate exceeds the available bandwidth, there’s buffering.
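The usual alternative to a single bitrate is to encode several renditions and let the player switch among them as throughput changes. The sketch below shows one simple selection heuristic, not anything Law presented and not the logic of any particular player: the `pickBitrate` name, the 0.8 safety factor and the rendition ladder in the usage note are all assumptions.

```typescript
// Pick the highest rendition that fits within a safety fraction of the
// measured throughput; fall back to the lowest rendition if none fits.
function pickBitrate(
  renditionsKbps: number[],
  throughputKbps: number,
  safety: number = 0.8 // assumed headroom so small dips do not cause stalls
): number {
  if (renditionsKbps.length === 0) {
    throw new Error("no renditions");
  }
  // Sort a copy ascending so the caller's array is left untouched.
  const sorted = [...renditionsKbps].sort((a, b) => a - b);
  const budget = throughputKbps * safety;
  let choice = sorted[0];
  for (const rate of sorted) {
    if (rate <= budget) {
      choice = rate;
    }
  }
  return choice;
}
```

For a hypothetical ladder of 1,000, 3,000, 6,000 and 16,000 kbps, a measured 14,000 kbps connection gets the 6,000 kbps rendition under this heuristic, because the 16,000 kbps stream would exceed the available bandwidth and buffer.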

On the player side, he said traditional over-the-top media players use Flash, which is still dominant on desktops and laptops. Mobile devices without Flash support have “fractured the player space,” he said.

Law made a case for a single-player environment, which can be content protected. He said the Consumer Technology Association had launched the Web Application Video Ecosystem project to address media player issues. WAVE members include Akamai, Apple, LG, Intel, Fraunhofer, Facebook, Dolby, Sony, Samsung, Microsoft, MLB Advanced Media, Comcast/NBCU, Sky and others. The idea is to simplify the development process for broadband media platforms, such as those accommodating 4K content.

“A complete, normative streaming player environment specification and interoperability tests are essential for quickly deploying Internet-delivered 4K UltraHD content in quantity, and to enable the broadest deployment of Full HD services,” the WAVE website states.

Law described how internet bandwidth would affect 4K delivery. He said the average throughput across Akamai’s servers in the United States is 14 Mbps.

“That’s insufficient for 4K, you need 15 to 16,” he said. However, about 18 to 22 percent of the U.S. population does have sufficient bandwidth for a 16 Mbps 4K stream. “The beauty of the internet is that we don’t have the infrastructure problem in building out. If there’s only five people in one city and a million in another, the internet can go and find them.”

What can broadcasters do today to anticipate ATSC 3.0 app development? Manson said the closer they keep to W3C standards, the more rapidly developers can respond. Vang encouraged broadcasters to do more experimentation on the digital side. “That content will be able to be repurposed on the broadcast platform,” he said.

Law said standards are the foundation for interoperability and robustness, agreed on hewing to W3C standards, and advocated for the use of MPEG-DASH.

Reprinted with permission from TV Technology.
